Difference between revisions of "Data Archival in KHIKA"

Latest revision as of 11:47, 14 June 2019


Overview

This section gives KHIKA administrators and users an understanding of the complete life cycle of data stored in KHIKA. In KHIKA, time-series log data from data sources is segregated into one or more workspaces, such that data from each distinct data source is typically stored in its own workspace's index. On receiving log data, KHIKA identifies the workspace associated with the data source and stores the data in that workspace's daily index. In other words, data received today is stored in today's index, while data received tomorrow is stored in tomorrow's index.
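To make the idea of a per-workspace daily index concrete, here is a minimal Python sketch. The naming convention (lowercased workspace name plus a date suffix) and the function `daily_index_name` are assumptions made for illustration only; KHIKA's actual index names may differ.

```python
from datetime import date


def daily_index_name(workspace: str, day: date) -> str:
    """Return a hypothetical index name for one workspace and one day."""
    # Assumed convention: lowercase the workspace name, replace spaces
    # with underscores, and append the day as YYYY.MM.DD.
    slug = workspace.strip().lower().replace(" ", "_")
    return f"{slug}-{day:%Y.%m.%d}"


# Data received on consecutive days lands in different daily indices.
print(daily_index_name("Linux Servers", date(2019, 3, 1)))
print(daily_index_name("Linux Servers", date(2019, 3, 2)))
```

Because each day has its own index, a whole day of data can later be archived or removed as a single unit.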

Since log data accumulated over time tends to become large (more than a few TB), KHIKA categorizes data into the following two types in order to maintain optimal application performance and to make prudent use of IT infrastructure and resources:

- Online storage - Data that needs to be readily searchable via the KHIKA UI is referred to as online data. The number of days for which data is kept in online storage is controlled by the workspace-level parameter called "TIME-TO-LIVE" (TTL), which is configurable as per customer requirements.

- Offline storage - Data older than the Time-To-Live period, which is no longer searchable via the KHIKA UI but needs to be retained for compliance or long-term retention, is referred to as offline data.

Data Archival Workflow

On receiving log data from data sources, KHIKA indexes and stores the data as online data. Online data is stored on a high-performance storage device (e.g. SSD or 15K RPM disk), and the number of days for which it is retained is controlled by the workspace-level parameter called "TIME-TO-LIVE" (TTL), which can be configured as per customer requirements. The default TTL, or online data retention period, for a workspace is 90 days.

As log data is received and stored in KHIKA, the Data Archival workflow automatically manages the life cycle of the data by moving it from online storage to offline storage based on the data retention period. Consider the following example, which uses a workspace named "Linux Servers" with its retention period set to 30 days.

- Log data from 1st March is received for this workspace and stored in online data storage.

- On 31st March, the 30-day retention period for the data of 1st March has elapsed.

- The Data Archival workflow takes a snapshot of the data index for 1st March from Elasticsearch. The snapshot is compressed and copied to the offline data storage for archival.

- The workflow computes a checksum for the stored snapshot and maintains it in the KHIKA database. The checksum is used to verify the integrity of the archived data.

- The data index for 1st March is then removed from Elasticsearch.

- A similar process is executed on 1st April to archive the data of 2nd March.
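The date arithmetic in the example above can be sketched in Python as follows. This is only an illustration of the schedule described in the steps, not KHIKA's implementation; the function names `archival_date` and `indices_due` are hypothetical.

```python
from datetime import date, timedelta


def archival_date(index_day: date, ttl_days: int) -> date:
    """Day on which the daily index for index_day becomes due for archival."""
    return index_day + timedelta(days=ttl_days)


def indices_due(index_days, ttl_days: int, today: date):
    """Return the daily indices whose retention period has elapsed by today."""
    return [d for d in index_days if archival_date(d, ttl_days) <= today]


ttl = 30  # retention period of the "Linux Servers" workspace in the example
print(archival_date(date(2019, 3, 1), ttl))  # data of 1st March
print(archival_date(date(2019, 3, 2), ttl))  # data of 2nd March
print(indices_due([date(2019, 3, 1), date(2019, 3, 2)], ttl, date(2019, 3, 31)))
```

With a 30-day TTL, the index for 1st March falls due on 31st March and the index for 2nd March on 1st April, matching the steps listed above.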

Once data moves to offline storage, it is no longer searchable on the Discover screen. However, if older archived data is required for forensic or investigation purposes, it can be restored to online storage.
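The checksum recorded at archival time lets the integrity of an archive be confirmed later, for example before a restore. The sketch below shows the general technique in Python. The choice of SHA-256 and the helper names `file_checksum` and `is_intact` are assumptions; this page does not state which checksum algorithm KHIKA uses.

```python
import hashlib
import tempfile
from pathlib import Path


def file_checksum(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute a SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()  # assumed algorithm, for illustration only
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_intact(path: Path, expected: str) -> bool:
    """Compare the current checksum of an archive with the recorded one."""
    return file_checksum(path) == expected


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        archive = Path(tmp) / "snapshot.bin"  # stand-in for an archived snapshot
        archive.write_bytes(b"example snapshot contents")
        recorded = file_checksum(archive)      # stored at archival time
        print(is_intact(archive, recorded))    # unchanged archive
        archive.write_bytes(b"corrupted")
        print(is_intact(archive, recorded))    # modified archive
```

Reading in chunks keeps memory use constant, which matters when a single day's snapshot is large.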

For SaaS

For SaaS accounts, the availability of data archival functionality depends on the type of license, as described below:

Basic License

For basic accounts, data archival functionality is not available, and data older than the TTL is automatically discarded once the TTL period has elapsed.

Advanced License

For advanced customers, data archival functionality is enabled. Data is automatically archived once the TTL period has elapsed and then ceases to be searchable from the KHIKA UI. The customer can restore archived data for a limited time period for investigation purposes.

For On-Premise

In an on-premise deployment, adequate storage, based on the organization's data retention policy, needs to be allocated for both online and offline data.

KHIKA is shipped as a CentOS 7 Linux-based VM and can be managed and administered as a Linux server. Before starting KHIKA, the Linux server administrator needs to allocate sufficient storage for both online and offline data. The administrator needs to mount the storage devices at the following locations:

For Online Storage

Online storage needs to be a high-performance storage device (e.g. SSD or 15K RPM disk) and should be mounted at the location "/opt/KHIKA/Data/Online"

For Offline Storage

Offline storage is typically a lower-performance, cost-effective storage device suitable for long-term data retention and should be mounted at the location "/opt/KHIKA/Data/Offine"
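Before starting KHIKA, an administrator may want to confirm that the storage devices are actually mounted at the expected locations. The following Python sketch uses the standard library's `os.path.ismount` for that check. The helper name `unmounted` is hypothetical and this script is not part of KHIKA.

```python
import os


def unmounted(paths):
    """Return the paths from the given list that are not mount points."""
    return [p for p in paths if not os.path.ismount(p)]


# Example: check the online storage location named above. Pass the
# offline storage location in the same list to check both at once.
missing = unmounted(["/opt/KHIKA/Data/Online"])
if missing:
    print("Not mounted:", ", ".join(missing))
else:
    print("All storage locations are mounted.")
```

A path that does not exist is reported as not mounted, so the same check also catches a missing directory.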

TIP: To accurately assess the storage requirement, first check the daily size of data in KHIKA, then estimate the storage space required based on the time period for which the data needs to be retained. Please note that the data retention period may depend on the compliance requirements of your organization.
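The estimate described in the tip is simple arithmetic and can be sketched in Python. The function `estimate_storage_gb`, the assumption that the total retention period is counted from the day the data is received, and the `archive_ratio` parameter (archived size relative to online size, since snapshots are compressed) are all illustrative assumptions. Measure real figures in your own deployment.

```python
def estimate_storage_gb(daily_gb, ttl_days, retention_days, archive_ratio=1.0):
    """Estimate online and offline storage (in GB) for one workspace.

    daily_gb       -- measured daily data size in KHIKA
    ttl_days       -- days the data stays in online storage (TTL)
    retention_days -- total days the data must be retained (assumed to
                      include the TTL period)
    archive_ratio  -- assumed size of archived data relative to online
                      data (1.0 means no size reduction)
    """
    online = daily_gb * ttl_days
    offline_days = max(retention_days - ttl_days, 0)
    offline = daily_gb * offline_days * archive_ratio
    return online, offline


# Hypothetical figures: 20 GB/day, 90-day TTL, 365-day retention,
# and archives assumed to be half the size of the online data.
online_gb, offline_gb = estimate_storage_gb(20, 90, 365, archive_ratio=0.5)
print(f"online: {online_gb} GB, offline: {offline_gb} GB")
```

Summing the result over all workspaces, and adding headroom for growth, gives a starting point for sizing the two storage devices.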

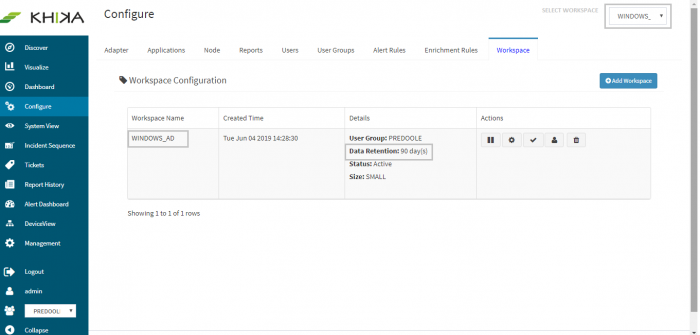

View Data Retention Settings

Select the desired workspace from the dropdown list at the top left of the KHIKA UI. From the left pane, go to Configure and select the Workspace tab. The 'TTL' or 'Data Retention Period' setting for the workspace appears in the 'Details' column of the Workspace Configuration table.

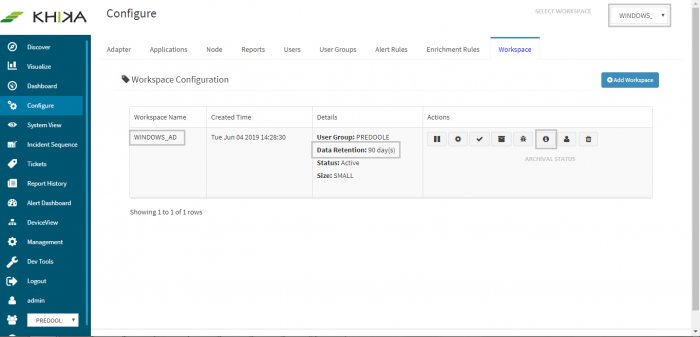

View Data Archival Status

Select the desired workspace from the dropdown list at the top left of the KHIKA UI. From the left pane, go to Configure and select the Workspace tab. Click the Archival status icon for the workspace. A pop-up appears asking for the "From" and "To" dates of the range for which the archival status is required. Select the desired dates to see the archival status report as shown below:

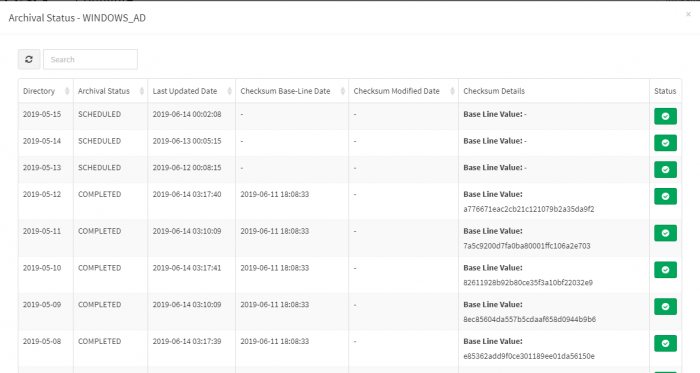

Data Archival Status Report

The archival status report shows the status of the archival tasks for individual day-wise data directories. Please note that the report also shows the checksum values for archived data directories.

Go to the next section to learn more about the anti data breach feature in KHIKA - File Integrity Monitoring